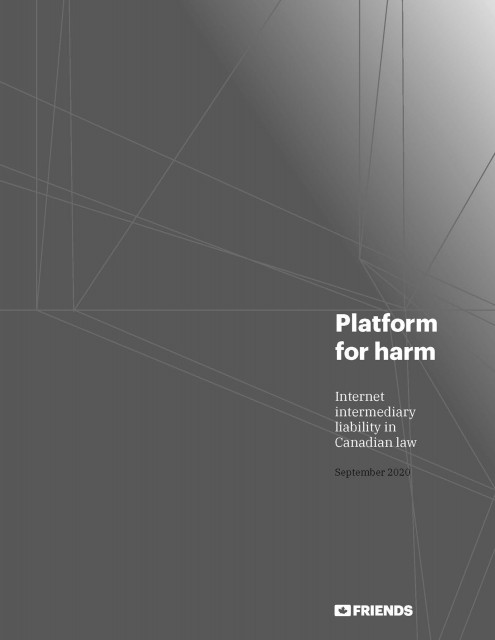

Platform Liability

Platforms like Facebook have become synonymous with hate, propaganda, and other harmful content that would land any other media company in court. Here's how Canada can tackle the problem and protect democracy.

In Canada, media companies like The Toronto Star, The Globe and Mail, or CBC are liable for the content they publish and face severe penalties for publishing harmful, defamatory, or illegal content like hate speech. As a result, these media companies take great care to ensure the content they publish is legal and accurate, and in doing so, they preserve the integrity of democratic debate.

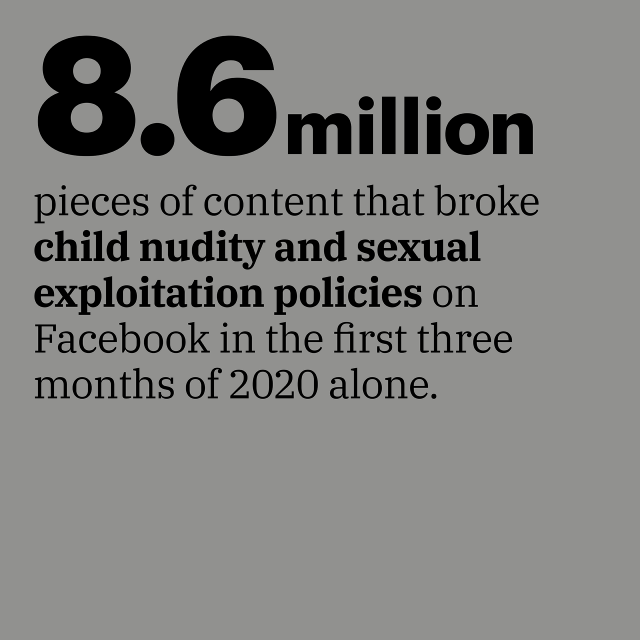

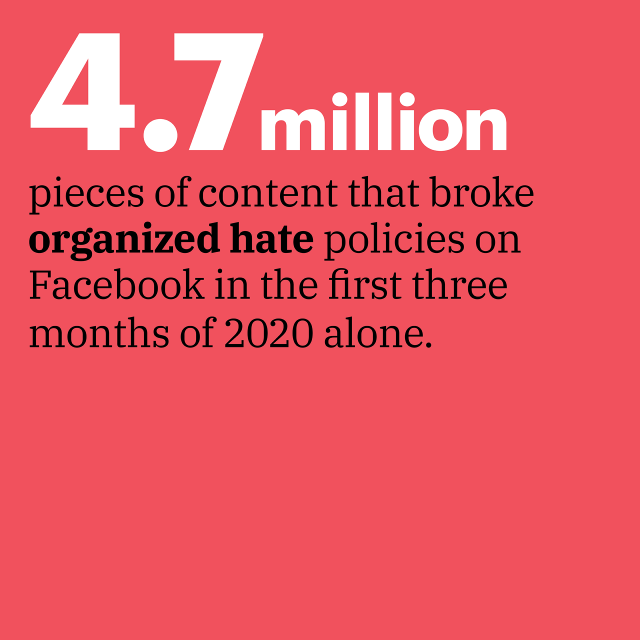

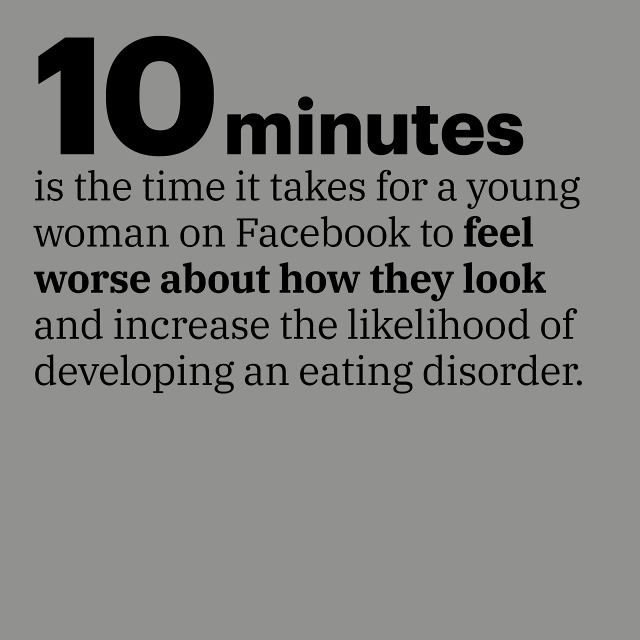

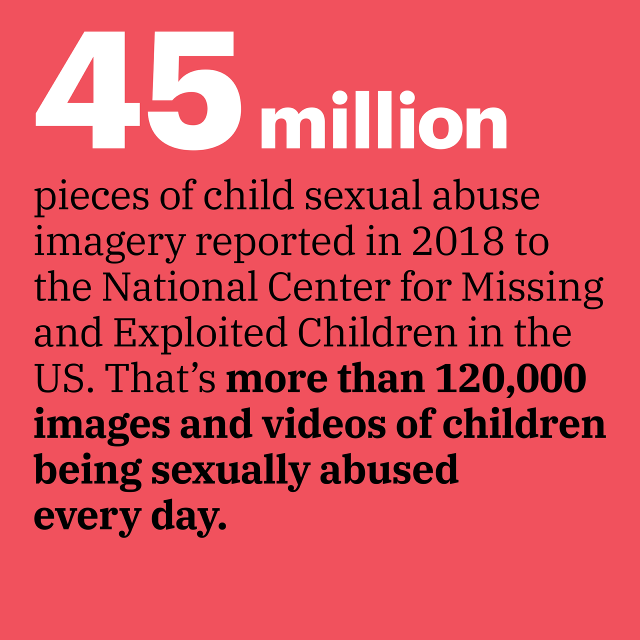

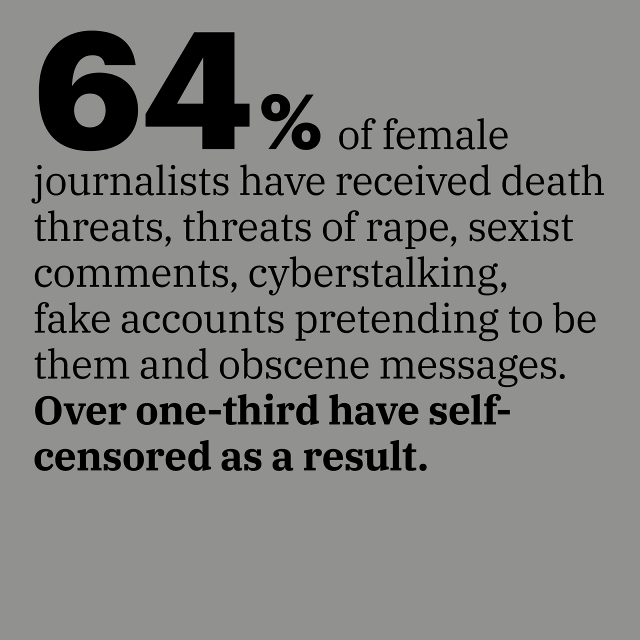

Platforms like Facebook and YouTube that host user-generated content do not consider themselves responsible for users' posts, and so far, Canadian police and prosecutors have done little to challenge that position. This leaves platforms free to publish, amplify, recommend, and make unprecedented sums of money from content that would land most other media companies in court. Examples include depictions of child abuse, revenge pornography, incitement to suicide, or worse.

However, there is reason to believe that existing Canadian law already holds companies like Facebook responsible for promoting hateful and illegal content.

Under Canadian law, you are considered a publisher (i.e., liable for content) if you know content is likely illegal and publish it anyway, or if you are notified about it after the fact but fail to take it down.

Companies like Facebook and YouTube meet both of these definitions:

- By their own admission, they can interpret, adjudicate, and remove harmful content before any human user ever sees it. This amounts to editorial intervention between the time a user submits a post and the time it becomes visible.

- They have repeatedly been notified of illegal content that they fail to remove, such as the Christchurch mosque massacre video, which was still available on Facebook more than six months after the attack.

If they are in fact publishers under Canadian law, these platforms should be held liable for amplifying and promoting hateful and illegal content.

Steven Guilbeault

Minister of Canadian Heritage

Steven Guilbeault succeeded Pablo Rodriguez as Minister of Canadian Heritage in 2019. Minister Guilbeault's mandate includes the CBC and other critical cultural institutions.

Navdeep Bains

Minister of Innovation, Science and Industry

Navdeep Bains is responsible for the safe and inclusive development of Canada's economy and for creating Canada's Digital Charter.

David Lametti

Minister of Justice and Attorney General of Canada

As Minister responsible for the Department of Justice, David Lametti is the government's legal representative.

Brenda Lucki

Commissioner of the Royal Canadian Mounted Police

As the RCMP's most senior member, Brenda Lucki controls and manages Canada's national police service and is responsible for keeping Canadians safe and secure.